In This Article

- 1. Why First-Party Data Stays Trapped in Your Warehouse

- 2. The Three Activation Paths and How They Differ

- 3. Google Ads: Enhanced Conversions, Customer Match, and the Data Manager API

- 4. Meta: CAPI Architecture and Event Match Quality

- 5. Programmatic: UID2, Clean Rooms, and DSP Audience Activation

- 6. Cross-Channel Consistency: One Data Source, Three Activation Pipelines

- 7. What Good Activation Looks Like in Production

Your customer data contains the answer to which acquisition profiles are worth paying more for. Purchase history, replenishment timing, email engagement, and category breadth all exist in your data warehouse. The ad platforms you are spending $50K to $300K per month on have access to none of it unless you build the pipeline to send it. Most brands have not built that pipeline.

First-party data activation is the process of moving that data from your warehouse into your ad platforms as live, structured signals that influence bidding decisions in real time. According to McKinsey research cited by S2W Media, businesses effectively using first-party data increase revenue by up to 15% while reducing marketing spend by 20%. Google's own Tag Gateway data shows a 14% uplift in conversion signals for advertisers who completed enhanced conversion infrastructure in 2025. These improvements do not come from creative. They come from better data reaching the auction.

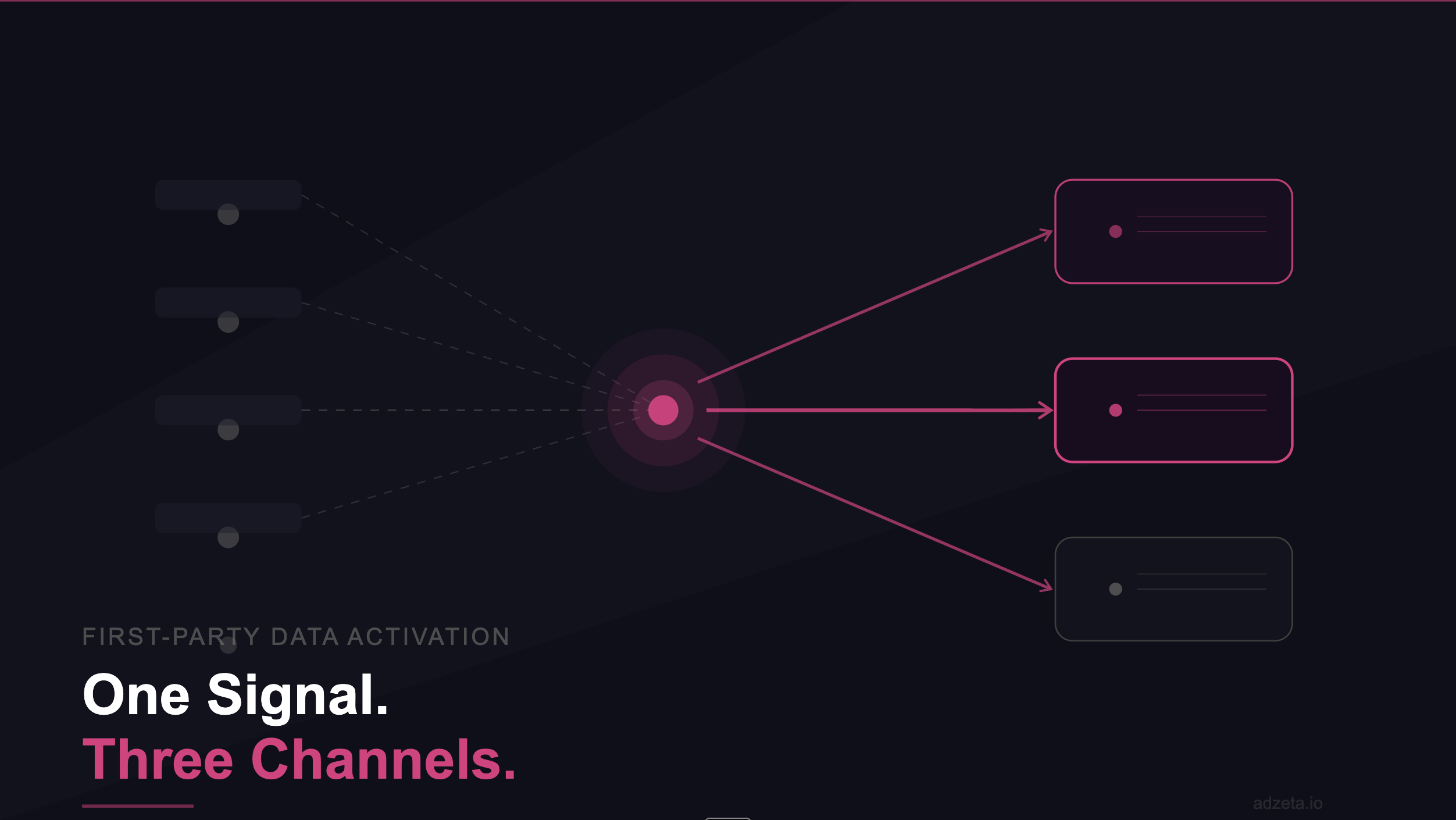

This guide covers the specific activation paths for Google, Meta, and programmatic DSPs: the technical interfaces, the signal types each channel accepts, the latency characteristics, and how to build a consistent first-party pipeline that works across all three simultaneously.

Why First-Party Data Stays Trapped in Your Warehouse

The gap between the data you own and the data your ad platforms receive is not a strategic oversight. It is an infrastructure problem. Most ecommerce brands have purchase history, CRM records, and behavioral signals in a data warehouse (BigQuery, Snowflake, or Redshift). Those systems were built for analytics and reporting. They were not built for real-time ad platform integration.

The default integration between an ecommerce store and an ad platform is a browser-based pixel. The pixel fires a JavaScript event when a qualifying action occurs on the user's device. Since Apple's App Tracking Transparency rollout in 2021, advertisers relying solely on the Pixel see 15-30% signal loss from iOS devices, compounding to 61-72% in some mobile configurations. The pixel fires in the browser and the browser increasingly blocks it.

Server-side activation closes this gap. Instead of relying on browser JavaScript, conversion events and customer signals flow from your server directly to ad platforms via API. First-party data delivers 2.9x revenue uplift versus third-party data, and 5-8x ROI on marketing spend, according to industry analysis. The performance differential is not from having better data. It is from having your data reach the auction at all.

The Three Activation Paths and How They Differ

Google, Meta, and programmatic DSPs accept first-party data through different technical interfaces, with different signal types, different latency characteristics, and different fidelity ceilings. Understanding the differences determines how you architect a cross-channel activation pipeline that does not require three separate engineering projects.

The core distinction is between walled garden platforms and open programmatic DSPs. Google and Meta accept first-party data via dedicated APIs and have strong identity matching infrastructure. Open programmatic DSPs accept first-party data via identity resolution frameworks such as The Trade Desk's Unified ID 2.0 or LiveRamp's Authenticated Traffic Solution, and they process audience uploads in batch rather than in real time.

Google Ads: Enhanced Conversions, Customer Match, and the Data Manager API

Google accepts first-party data through three primary mechanisms. Enhanced Conversions sends hashed customer identifiers (email, phone, address) alongside conversion events, improving the accuracy of attribution by matching conversions to signed-in Google accounts. This is the baseline implementation that every brand spending on Google should have running.
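As a rough illustration of what "hashed customer identifiers" means in practice, the sketch below normalizes an email address and phone number and then applies SHA-256. The function names are hypothetical, and the phone handling is deliberately simplistic: it assumes any number without a leading `+` is a national number for a single default country, which will not hold for every dataset.

```python
import hashlib
import re


def normalize_email(email: str) -> str:
    """Trim and lowercase; for Gmail addresses, also drop dots in the local part."""
    email = email.strip().lower()
    local, sep, domain = email.partition("@")
    if not sep:
        return email  # not a well-formed address; leave it for upstream validation
    if domain in ("gmail.com", "googlemail.com"):
        local = local.replace(".", "")
    return f"{local}@{domain}"


def normalize_phone(phone: str, default_country_code: str = "1") -> str:
    """Reduce a phone number to E.164 style: '+' followed by digits only.

    Assumption: input without a leading '+' is a national number that
    belongs to default_country_code.
    """
    digits = re.sub(r"\D", "", phone)
    if phone.strip().startswith("+"):
        return "+" + digits
    return "+" + default_country_code + digits


def sha256_hex(value: str) -> str:
    """Hex-encoded SHA-256 of an already-normalized identifier."""
    return hashlib.sha256(value.encode("utf-8")).hexdigest()


hashed_email = sha256_hex(normalize_email("  Jane.Doe@Gmail.com "))
hashed_phone = sha256_hex(normalize_phone("(555) 010-9999"))
```

Normalizing before hashing matters because a hash of `Jane.Doe@Gmail.com` and a hash of `janedoe@gmail.com` are unrelated strings, so unnormalized input quietly lowers match rates.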

Customer Match uploads hashed customer lists to Google and activates them as audience segments across Search, Shopping, YouTube, Gmail, and Display. For pLTV bidding, the critical use case is stratifying Customer Match lists by predicted LTV tier: top 20% by predicted 12-month value, mid-tier, and a suppression list for low-LTV profiles that should not receive competitive bids.
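The tier split can be sketched as a simple rank-based cut. This is a minimal example, not a prescribed method: the `ScoredCustomer` type and `assign_tiers` function are hypothetical, and the 20% cutoff for the low tier is an assumption, since only the top 20% boundary is stated above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredCustomer:
    customer_id: str
    predicted_ltv_12m: float  # predicted 12-month value from the pLTV model


def assign_tiers(customers, top_share=0.20, low_share=0.20):
    """Rank customers by predicted LTV and label each 'high', 'mid', or 'low'.

    top_share follows the "top 20%" rule; low_share is an assumed cutoff
    for the suppression list.
    """
    ranked = sorted(customers, key=lambda c: c.predicted_ltv_12m, reverse=True)
    n = len(ranked)
    n_high = round(n * top_share)
    n_low = round(n * low_share)
    tiers = {}
    for rank, customer in enumerate(ranked):
        if rank < n_high:
            tiers[customer.customer_id] = "high"
        elif rank >= n - n_low:
            tiers[customer.customer_id] = "low"
        else:
            tiers[customer.customer_id] = "mid"
    return tiers


customers = [ScoredCustomer(f"c{i}", float(i * 10)) for i in range(1, 11)]
tiers = assign_tiers(customers)
```

Each tier would then be exported as its own Customer Match list, with the low tier used for suppression rather than targeting.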

The most significant infrastructure development in 2025 was Google's Data Manager API, launched December 9, 2025. The API establishes a single ingestion point for first-party data across Google Ads, Google Analytics, and Display and Video 360, replacing the fragmented multi-API workflow most brands were using. For brands activating pLTV signals as conversion values, the Data Manager API consolidates what previously required separate implementations for each Google product.

Google Activation Priority Order

Start with Enhanced Conversions (fastest to implement, highest signal quality return). Layer in Customer Match segmented by pLTV tier. Then build toward Offline Conversion Import via the Data Manager API to pass pLTV scores as per-event conversion values with sub-second latency.

Meta: CAPI Architecture and Event Match Quality

Meta's Conversions API is a server-to-server integration that sends conversion events directly from your server to Meta's Marketing API, bypassing browser restrictions entirely. Combined with the Meta Pixel (which still captures real-time browser events), CAPI provides redundant coverage that prevents signal loss from iOS restrictions, ad blockers, and Safari's Intelligent Tracking Prevention.
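To make the server-to-server shape concrete, here is a sketch of a single purchase event as a Conversions API payload. The helper name, the `purchase-<order_id>` event ID scheme, and the choice to carry the pLTV score in `value` are assumptions for illustration. The block only builds the JSON body; in a real integration it would be POSTed to the pixel's events endpoint on the Graph API with an access token.

```python
import hashlib
import json
import time


def sha256_hex(value: str) -> str:
    """Hex SHA-256 of a trimmed, lowercased identifier."""
    return hashlib.sha256(value.strip().lower().encode("utf-8")).hexdigest()


def build_purchase_event(order_id, email, phone_digits, client_ip, user_agent,
                         value, currency="USD", event_time=None):
    """Build one server-side Purchase event.

    event_id must match the ID the browser pixel sends for the same
    purchase so the two copies can be deduplicated.
    phone_digits is assumed to be digits only, including country code.
    """
    return {
        "event_name": "Purchase",
        "event_time": int(event_time if event_time is not None else time.time()),
        "event_id": f"purchase-{order_id}",  # hypothetical ID scheme
        "action_source": "website",
        "user_data": {
            "em": [sha256_hex(email)],
            "ph": [sha256_hex(phone_digits)],
            "client_ip_address": client_ip,    # sent unhashed
            "client_user_agent": user_agent,   # sent unhashed
        },
        "custom_data": {
            "currency": currency,
            "value": round(float(value), 2),   # e.g. the pLTV score
        },
    }


event = build_purchase_event(
    order_id="1001",
    email=" Jane@Example.com ",
    phone_digits="15550109999",
    client_ip="203.0.113.7",
    user_agent="Mozilla/5.0",
    value=182.456,
    event_time=1700000000,
)
body = json.dumps({"data": [event]})
```

Deriving `event_id` from the order ID keeps the browser and server copies of the same purchase aligned without extra shared state.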

The operational metric that determines whether your CAPI implementation is actually improving performance is Event Match Quality (EMQ). Meta's EMQ scoring measures how accurately conversion events match to real Facebook users, on a scale of 0 to 10. Pixel-only implementations typically score EMQ 3-5. Adding hashed email and phone via CAPI moves it to 6-8. Adding IP address and user agent reaches 8-9. Sending the maximum set of identifiers, with a pLTV score as the conversion value, reaches EMQ 9-10.

At EMQ 9-10 with pLTV conversion values, Meta's Andromeda algorithm has both the highest-fidelity user match and the most accurate optimization target simultaneously. Based on AdZeta client data, average ROAS at this configuration runs at 5.6x versus 2.9x for Pixel-only implementations. The gap compounds over time as Andromeda trains on progressively richer signal quality.

Programmatic: UID2, Clean Rooms, and DSP Audience Activation

Programmatic DSPs operate outside the walled gardens of Google and Meta. They require first-party data activation through identity resolution frameworks rather than proprietary APIs. The dominant standard for open programmatic is The Trade Desk's Unified ID 2.0 (UID2), which creates an encrypted, hashed identifier from a consented email address that can be used to target and measure audiences across the open web, CTV, and DOOH without relying on third-party cookies.

For LTV-based audience targeting in programmatic, the architecture involves exporting LTV-segmented customer lists from your warehouse, normalizing and hashing the email addresses, converting those hashes to UID2 identifiers through the UID2 service, and uploading the identifiers to your DSP as first-party audience segments. According to Adtelligent's 2026 DSP analysis, modern DSPs now rely almost entirely on first-party data activation via clean rooms and privacy-safe integrations for audience targeting as third-party cookies have degraded.
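The hashing step for UID2 differs slightly from the walled-garden formats, so a short sketch may help. It normalizes an email, takes the SHA-256 digest, and base64-encodes the raw digest bytes, which is the hashed-email form the UID2 service takes as input. The function names are hypothetical, and the output is only the input to UID2 generation, not a UID2 itself; the mapping call to the UID2 service is out of scope here.

```python
import base64
import hashlib


def normalize_email_for_uid2(email: str) -> str:
    """Trim and lowercase; for gmail.com, drop dots and any '+suffix' in the local part."""
    email = email.strip().lower()
    local, sep, domain = email.partition("@")
    if domain == "gmail.com":
        local = local.split("+", 1)[0].replace(".", "")
    return f"{local}{sep}{domain}"


def uid2_email_hash(email: str) -> str:
    """Base64-encoded SHA-256 digest of the normalized email.

    Note the encoding: the raw 32-byte digest is base64-encoded,
    not the hex string.
    """
    normalized = normalize_email_for_uid2(email)
    digest = hashlib.sha256(normalized.encode("utf-8")).digest()
    return base64.b64encode(digest).decode("ascii")


hashed = uid2_email_hash(" Jane.Doe+promo@Gmail.com ")
```

A common mistake is base64-encoding the hex string rather than the digest bytes, which produces a value that will not match.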

Data clean rooms enable the most sophisticated programmatic activation use case: combining your first-party customer LTV data with a publisher's or retailer's first-party data to identify your highest-value customer profiles in their audience, without either party exposing raw PII to the other. According to the 2025 State of Retail Media report, nearly two-thirds of organizations are already using clean rooms in some form. For brands spending on programmatic, this is the mechanism that allows LTV-based targeting at scale outside the Google and Meta ecosystems.

Cross-Channel Consistency: One Data Source, Three Activation Pipelines

The most common activation failure is maintaining separate first-party data pipelines for each channel. The Google team manages Customer Match uploads. The Meta team manages CAPI. The programmatic team manages DSP audience uploads. All three work from different data extracts at different cadences, producing inconsistent audience definitions across channels and a customer who receives contradictory bid treatment depending on which platform serves the impression.

The correct architecture unifies first-party data upstream before any channel-specific activation. A single customer record in your warehouse feeds a single pLTV scoring model, which produces a consistent customer value tier that is then distributed to Google via the Data Manager API, to Meta via CAPI, and to your DSP via UID2-encoded audience upload. The customer's predicted LTV tier is consistent across all three activation paths.

Unify customer records upstream

All purchase history, behavioral events, and customer identifiers consolidated in a single warehouse table. One source of truth before any channel-specific format conversion.

Run pLTV scoring against unified records

The ML model scores each customer record once. That score is the same number that flows to Google, Meta, and the DSP - not three separate scoring runs with potentially different outputs.

Format-convert for each channel's API

Google requires SHA-256-hashed email with GCLID. Meta CAPI requires hashed email, phone, IP, and user agent with event_id for deduplication. UID2 requires hashed email encoded via the UID2 Operator. Format conversion is the only channel-specific step.

Activate with consistent value tiers

High pLTV (top 20%): aggressive bid multiplier. Mid pLTV: baseline bid. Low pLTV: suppression or exclusion. These tiers should map to identical bid behavior across all three channels.

Validate with channel-specific match rate monitoring

Google: Customer Match match rate (target 40%+). Meta: EMQ score per event (target 7+). DSP: UID2 addressable reach. Different metrics, same underlying data quality question.
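The steps above can be sketched as a single fan-out function: one scored record goes in, three channel-specific records come out, and the score, tier, and bid policy are copied rather than recomputed. Everything named here is hypothetical (`UnifiedCustomer`, `fan_out`, the record field names, and the multiplier values in `TIER_POLICY`), and the records are simplified stand-ins for each channel's real upload format.

```python
import base64
import hashlib
from dataclasses import dataclass

# Hypothetical policy table, shared by every channel so tiers cannot drift.
TIER_POLICY = {
    "high": {"action": "bid_up", "multiplier": 1.5},
    "mid": {"action": "baseline", "multiplier": 1.0},
    "low": {"action": "suppress", "multiplier": 0.0},
}


@dataclass(frozen=True)
class UnifiedCustomer:
    customer_id: str
    email: str
    pltv_score: float  # scored once, upstream
    pltv_tier: str     # 'high', 'mid', or 'low'


def fan_out(customer: UnifiedCustomer) -> dict:
    """Convert one unified record into per-channel records.

    Only the identifier encoding differs per channel; the value fields
    are shared verbatim.
    """
    digest = hashlib.sha256(customer.email.strip().lower().encode("utf-8")).digest()
    shared = {
        "pltv_score": customer.pltv_score,
        "pltv_tier": customer.pltv_tier,
        "policy": TIER_POLICY[customer.pltv_tier],
    }
    return {
        "google": {"hashed_email": digest.hex(), **shared},
        "meta": {"em": digest.hex(), **shared},
        "dsp": {"email_hash_b64": base64.b64encode(digest).decode("ascii"), **shared},
    }


records = fan_out(UnifiedCustomer("c42", "Jane@Example.com", 412.50, "high"))
```

Because the tier and policy are looked up once and copied, a mismatch between channels can only come from the format-conversion step, which narrows where to look when match rates diverge.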

What Good Activation Looks Like in Production

A production first-party data activation system has three operational characteristics that distinguish it from a pilot or a one-time upload. First, it runs continuously. Customer LTV scores update as new behavioral signals arrive. The activation pipeline pushes updated audiences and conversion values to each channel without manual intervention. Second, it has latency appropriate to each channel: sub-second for Google and Meta API activations, daily batch for programmatic audience uploads.

Third, and most critical, it is auditable. Every bid modifier should trace back to a specific pLTV score, which traces back to specific features in the model. You should be able to answer "why did we bid $4.20 more for customer A than customer B?" with a specific answer derived from your data, not a black-box algorithm decision. Auditability is what separates an owned signal from a rented one.
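One way to make that question answerable is to store an audit record alongside every bid modifier. The sketch below is an assumption about how such a record might look, not a description of any particular system: `BidAuditRecord` and `explain_difference` are hypothetical names, and it presumes the model can emit additive per-feature contributions to the pLTV score (for example, from a linear model or an attribution method).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BidAuditRecord:
    customer_id: str
    model_version: str
    pltv_score: float
    feature_contributions: dict  # feature name -> contribution to pltv_score
    bid_modifier: float


def explain_difference(a: BidAuditRecord, b: BidAuditRecord) -> dict:
    """Per-feature contribution gap between two customers, largest gap first."""
    features = set(a.feature_contributions) | set(b.feature_contributions)
    deltas = {
        name: round(
            a.feature_contributions.get(name, 0.0)
            - b.feature_contributions.get(name, 0.0),
            2,
        )
        for name in features
    }
    return dict(sorted(deltas.items(), key=lambda item: abs(item[1]), reverse=True))


customer_a = BidAuditRecord(
    "A", "pltv-v3", 142.5,
    {"repeat_purchase_rate": 30.0, "category_breadth": 12.5}, 1.5,
)
customer_b = BidAuditRecord(
    "B", "pltv-v3", 115.0,
    {"repeat_purchase_rate": 10.0, "email_engagement": 5.0}, 1.0,
)
gap = explain_difference(customer_a, customer_b)
```

Recording the model version with each score keeps the explanation reproducible after the model is retrained.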

AdZeta's Signal Engineering article covers the upstream feature engineering that produces audit-ready pLTV signals. The pLTV Google Ads implementation guide and Meta LTV bidding guide cover the channel-specific activation mechanics in detail.

Key Takeaways

- First-party data activation is three separate technical paths - Google Enhanced Conversions and Data Manager API, Meta CAPI, and DSP UID2/clean room audience uploads - each with different latency, fidelity, and implementation requirements.

- The 2025 Google Data Manager API consolidates first-party data uploads across Google Ads, Analytics, and DV360 into a single ingestion point, replacing the fragmented multi-API workflow most brands were running.

- EMQ score on Meta determines how many of your conversion events are used for optimization. Pixel-only averages EMQ 3-5. Full CAPI with pLTV conversion values reaches EMQ 9-10, corresponding to a 5.6x average ROAS in AdZeta client data.

- Programmatic DSP activation runs on batch latency (24-48 hours), making it best suited for LTV-tier audience suppression, remarketing, and prospecting rather than real-time bid adjustment.

- The correct architecture unifies first-party data upstream before any channel-specific activation. One pLTV score per customer, distributed consistently to Google, Meta, and DSP - not three separate pipelines producing inconsistent audience definitions.

For the foundational concepts behind pLTV modeling and why historical LTV analysis fails real-time acquisition decisions, see What Is Predictive Customer Lifetime Value (pLTV) and Why Every Growth Team Should Care.